iOS Vision framework x WWDC 24 Discover Swift enhancements in the Vision framework Session

Vision framework Feature Review & iOS 18 New Swift API Hands-on Experience

Photo by BoliviaInteligente

Topic

The Vision framework has nothing to do with Vision Pro; the two are about as related as a hot dog and a dog.

Vision framework

The Vision framework is Apple’s machine learning-based image recognition framework. It lets developers implement common image recognition features quickly and easily. Vision was introduced with iOS 11 (2017, the iPhone 8 generation) and has been updated and optimized continuously since. Recent releases improved its integration with Swift Concurrency, and starting with iOS 18 it offers a brand-new Swift API that takes full advantage of Swift Concurrency.

Vision framework Features

- Numerous built-in image recognition and motion tracking methods (31 types as of iOS 18)
- Runs on-device using only the phone’s chip, with no reliance on cloud services, so recognition is fast and secure
- Simple, easy-to-use API
- Supported across Apple platforms: iOS 11.0+, iPadOS 11.0+, Mac Catalyst 13.0+, macOS 10.13+, tvOS 11.0+, visionOS 1.0+
- Available for many years (2017 to present) and continuously updated
- Integrates Swift language features to improve computational performance

Played with this 6 years ago: Vision Introduction — APP Avatar Upload Automatic Face Detection and Cropping (Swift)

This time, paired with the WWDC 24 Discover Swift enhancements in the Vision framework Session, I revisited and explored it again with the new Swift features.

CoreML

Apple also has another framework, CoreML, for on-device machine learning. It lets you embed your own trained models, such as object or document recognition models, directly in your app. If you are interested, give it a try (e.g., real-time article classification, real-time spam detection).

p.s.

Vision is mainly used for image analysis tasks such as face recognition, barcode detection, and text recognition. It provides powerful APIs to process and analyze visual content in static images or videos.

VisionKit: designed specifically for document scanning. It provides a scanner view controller that scans documents and produces high-quality PDFs or images.

In my testing, the Vision framework could not run on the iOS Simulator on M1 Macs and had to be tested on a physical device. Running it in the Simulator throws a “Could not create Espresso context” error, and I found no solution in the official forum discussion.

Since I don’t have a physical iOS 18 device for testing, all results in this article are based on the old (pre-iOS 18) code; please leave a comment if there are any errors with the new syntax.

WWDC 2024 — Discover Swift enhancements in the Vision framework


This article is a summary of WWDC 24 — Discover Swift enhancements in the Vision framework session, along with some personal experiment notes.

Introduction — Vision framework Features

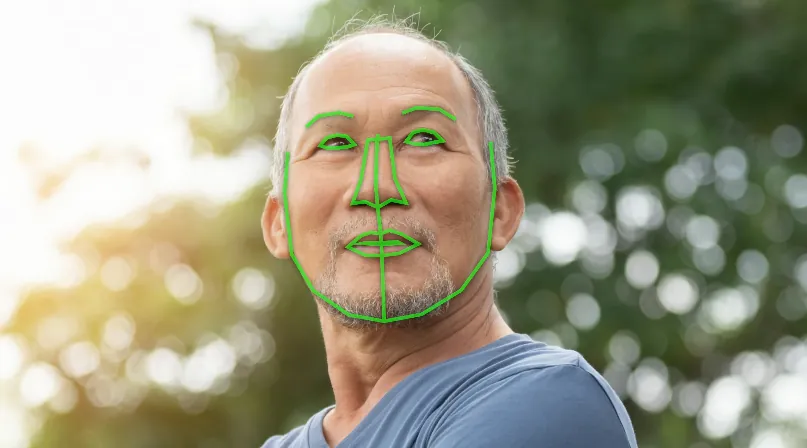

Face Recognition and Contour Detection

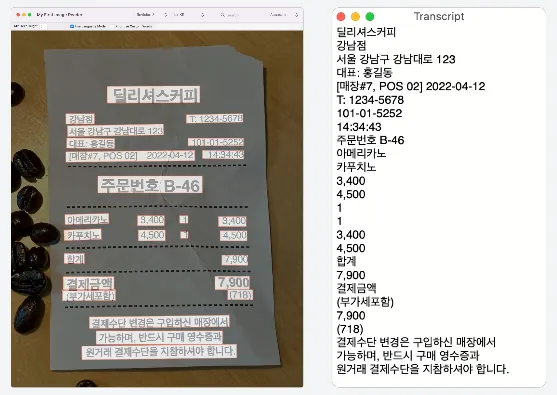

Text Recognition in Images

As of iOS 18, 18 languages are supported.

```swift
// List of supported languages
if #available(iOS 18.0, *) {
    print(RecognizeTextRequest().supportedRecognitionLanguages.map { "\($0.languageCode!)-\(($0.region?.identifier ?? $0.script?.identifier)!)" })
} else {
    print(try! VNRecognizeTextRequest().supportedRecognitionLanguages())
}

// The actual available recognition languages are based on this.
// Tested output on iOS 18:
// ["en-US", "fr-FR", "it-IT", "de-DE", "es-ES", "pt-BR", "zh-Hans", "zh-Hant", "yue-Hans", "yue-Hant", "ko-KR", "ja-JP", "ru-RU", "uk-UA", "th-TH", "vi-VT", "ar-SA", "ars-SA"]
// Swedish, mentioned at WWDC, did not appear; unclear whether it is not released yet or depends on device region/language settings
```

Dynamic Motion Capture

- Dynamic tracking of people and objects
- Hand-gesture capture, enabling features such as signing in the air

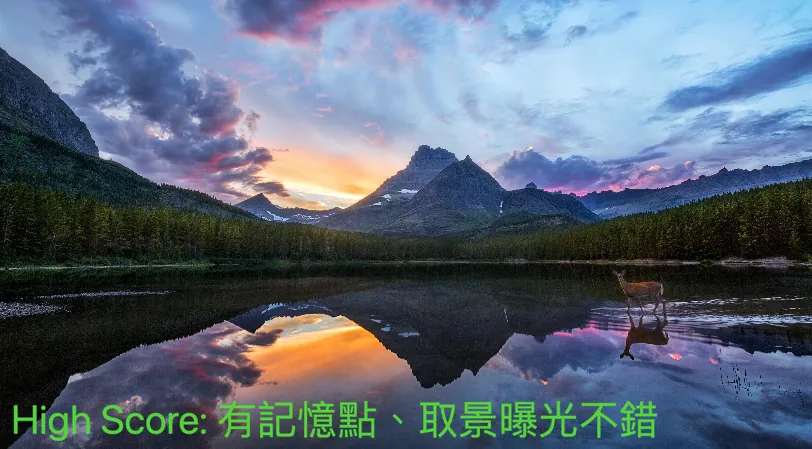

What’s new in Vision? (iOS 18): Image aesthetics scoring (quality, memorability)

- Calculates a score for the input image, making it easy to filter for high-quality photos
- The score covers multiple dimensions: not only image quality but also lighting, angle, subject, and whether the image evokes memorable feelings, among others.

WWDC used the three images above (all of the same technical image quality) to explain the scores:

- High-scoring images: good composition, lighting, and memorable elements
- Low-scoring images: no clear subject, like casual or accidental shots
- Utility images: technically well shot but not memorable, like images used in stock photo libraries

iOS ≥ 18 New API: CalculateImageAestheticsScoresRequest

```swift
let request = CalculateImageAestheticsScoresRequest()
let result = try await request.perform(on: URL(string: "https://zhgchg.li/assets/cb65fd5ab770/1*yL3vI1ADzwlovctW5WQgJw.jpeg")!)

// Photo score
print(result.overallScore)

// Whether it is classified as a utility image
print(result.isUtility)
```
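To make the "filter high-quality photos" idea concrete, here is a minimal sketch that runs without Vision. `ScoredPhoto` and `keepers(from:minimumScore:)` are hypothetical names, not Vision API; they stand in for values you would copy out of the request result's `overallScore` and `isUtility`. The 0.5 cutoff and the sample scores are arbitrary assumptions.

```swift
// Hypothetical stand-in for the two values printed above; not a Vision type.
struct ScoredPhoto {
    let name: String
    let overallScore: Float
    let isUtility: Bool
}

// Keeps non-utility photos at or above the cutoff, highest score first.
func keepers(from photos: [ScoredPhoto], minimumScore: Float = 0.5) -> [ScoredPhoto] {
    photos
        .filter { !$0.isUtility && $0.overallScore >= minimumScore }
        .sorted { $0.overallScore > $1.overallScore }
}

// Made-up sample scores, for illustration only.
let samplePhotos = [
    ScoredPhoto(name: "sunset", overallScore: 0.82, isUtility: false),
    ScoredPhoto(name: "receipt", overallScore: 0.61, isUtility: true),
    ScoredPhoto(name: "blurry", overallScore: -0.30, isUtility: false),
    ScoredPhoto(name: "portrait", overallScore: 0.55, isUtility: false),
]
print(keepers(from: samplePhotos).map(\.name)) // ["sunset", "portrait"]
```

Excluding utility images is a design choice for a "best shots" album; a document-search feature might want the opposite filter.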

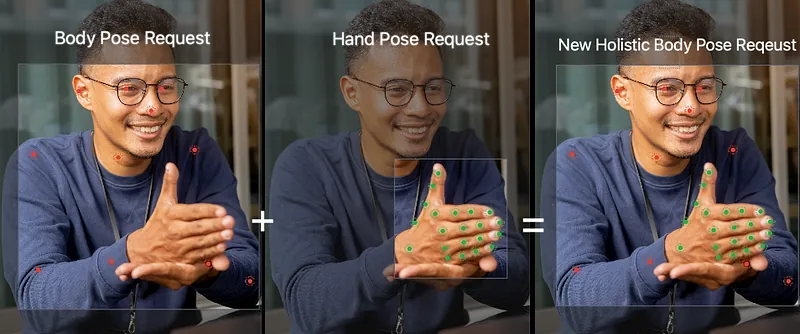

What’s new in Vision? (iOS 18): Simultaneous Body and Hand Pose Detection

Previously, body pose and hand pose had to be detected separately. This update lets developers detect both at once, combined into a single request and a single result, which makes more kinds of apps easier to build.

iOS ≥ 18 New API: DetectHumanBodyPoseRequest

```swift
var request = DetectHumanBodyPoseRequest()

// Also detect hand poses
request.detectsHands = true

guard let bodyPose = try await request.perform(on: image).first else { return }

// Body pose joints
let bodyJoints = bodyPose.allJoints()

// Left hand pose joints
let leftHandJoints = bodyPose.leftHand.allJoints()

// Right hand pose joints
let rightHandJoints = bodyPose.rightHand.allJoints()
```

New Vision API

In this update Apple provides a new Swift API for Vision. Besides supporting the existing features, it is built around Swift 6 and Swift Concurrency, making the API more efficient and more natural to use from Swift.

Get started with Vision

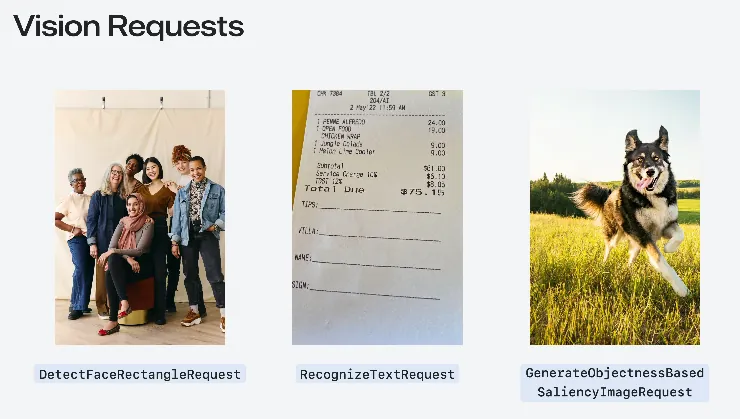

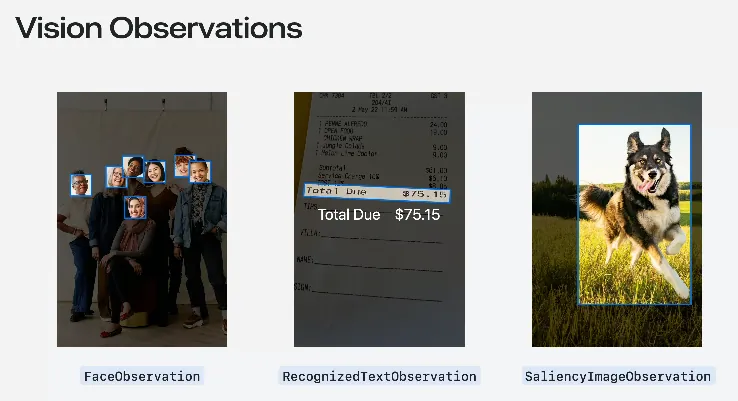

Here the speaker reviewed the basic usage of the Vision framework. Apple packages common image recognition tasks as “Request” types (31 as of iOS 18), each of which returns a corresponding “Observation” result.

- Request: DetectFaceRectanglesRequest (face rectangle detection request). Result: FaceObservation. The previous article “Vision Introduction: APP Profile Picture Upload Automatic Face Cropping (Swift)” used this request pair.
- Request: RecognizeTextRequest (text recognition request). Result: RecognizedTextObservation.
- Request: GenerateObjectnessBasedSaliencyImageRequest (objectness-based saliency request). Result: SaliencyImageObservation.

All 31 Request Types:

| Request | Purpose | Observation | Description |
| --- | --- | --- | --- |
| CalculateImageAestheticsScoresRequest | Calculate the aesthetic score of an image. | AestheticsObservation | Returns the aesthetic score of the image, including composition, color, and other factors. |
| ClassifyImageRequest | Classify image content. | ClassificationObservation | Returns classification labels and confidence for objects or scenes in the image. |
| CoreMLRequest | Analyze image using a Core ML model. | CoreMLFeatureValueObservation | Generates observations based on the Core ML model output. |
| DetectAnimalBodyPoseRequest | Detect animal poses in the image. | RecognizedPointsObservation | Returns animal skeleton points and their positions. |
| DetectBarcodesRequest | Detect barcodes in the image. | BarcodeObservation | Returns barcode data and type (e.g., QR code). |
| DetectContoursRequest | Detect contours in the image. | ContoursObservation | Returns detected contour lines in the image. |
| DetectDocumentSegmentationRequest | Detect and segment documents in the image. | RectangleObservation | Returns the rectangular boundary of the document. |
| DetectFaceCaptureQualityRequest | Evaluate face capture quality. | FaceObservation | Returns quality assessment scores of the face image. |
| DetectFaceLandmarksRequest | Detect facial landmarks. | FaceObservation | Returns detailed positions of facial features (e.g., eyes, nose). |
| DetectFaceRectanglesRequest | Detect faces in the image. | FaceObservation | Returns bounding boxes of detected faces. |
| DetectHorizonRequest | Detect the horizon in the image. | HorizonObservation | Returns the angle and position of the horizon. |
| DetectHumanBodyPose3DRequest | Detect 3D human body poses in the image. | RecognizedPointsObservation | Returns 3D human skeleton points and spatial coordinates. |
| DetectHumanBodyPoseRequest | Detect human body poses in the image. | RecognizedPointsObservation | Returns human skeleton points and their coordinates. |
| DetectHumanHandPoseRequest | Detect hand poses in the image. | RecognizedPointsObservation | Returns hand skeleton points and their positions. |
| DetectHumanRectanglesRequest | Detect humans in the image. | HumanObservation | Returns bounding boxes of detected humans. |
| DetectRectanglesRequest | Detect rectangles in the image. | RectangleObservation | Returns coordinates of the four vertices of rectangles. |
| DetectTextRectanglesRequest | Detect text regions in the image. | TextObservation | Returns positions and bounding boxes of text regions. |
| DetectTrajectoriesRequest | Detect and analyze object motion trajectories. | TrajectoryObservation | Returns motion trajectory points and their time sequence. |
| GenerateAttentionBasedSaliencyImageRequest | Generate attention-based saliency image. | SaliencyImageObservation | Returns saliency maps highlighting the most attractive areas of the image. |
| GenerateForegroundInstanceMaskRequest | Generate foreground instance mask image. | InstanceMaskObservation | Returns masks of foreground objects. |
| GenerateImageFeaturePrintRequest | Generate image feature prints for comparison. | FeaturePrintObservation | Returns feature print data of the image for similarity comparison. |
| GenerateObjectnessBasedSaliencyImageRequest | Generate objectness-based saliency image. | SaliencyImageObservation | Returns saliency maps highlighting object regions. |
| GeneratePersonInstanceMaskRequest | Generate person instance mask image. | InstanceMaskObservation | Returns masks of person instances. |
| GeneratePersonSegmentationRequest | Generate person segmentation image. | SegmentationObservation | Returns binary masks for person segmentation. |
| RecognizeAnimalsRequest | Detect and identify animals in the image. | RecognizedObjectObservation | Returns animal types and confidence levels. |
| RecognizeTextRequest | Detect and recognize text in the image. | RecognizedTextObservation | Returns detected text content and its region positions. |
| TrackHomographicImageRegistrationRequest | Track homographic image registration. | ImageAlignmentObservation | Returns homography transformation matrix between images for alignment. |
| TrackObjectRequest | Track objects in the image. | DetectedObjectObservation | Returns object positions and velocity information in the image. |
| TrackOpticalFlowRequest | Track optical flow in the image. | OpticalFlowObservation | Returns optical flow vector field describing pixel movements. |
| TrackRectangleRequest | Track rectangles in the image. | RectangleObservation | Returns position, size, and rotation angle of rectangles in the image. |
| TrackTranslationalImageRegistrationRequest | Track translational image registration. | ImageAlignmentObservation | Returns translation transformation matrix between images for alignment. |

- Names with a VN prefix are the old API style (versions before iOS 18).

The speaker mentioned several commonly used Requests, as follows.

ClassifyImageRequest

Classifies the input image and returns labels with their confidence scores.

![[Travelogue] Second Visit to Kyushu in 2024: 9-Day Independent Trip, Entering via Busan→Hakata Cruise](/assets/755509180ca8/1*f1rNoOIQbE33M9F9NmoTXg.webp)

[Travelogue] Second Visit to Kyushu in 2024: 9-Day Independent Trip, Entering via Busan → Hakata Cruise

```swift
if #available(iOS 18.0, *) {
    // New API using Swift features
    let request = ClassifyImageRequest()
    Task {
        do {
            let observations = try await request.perform(on: URL(string: "https://zhgchg.li/assets/cb65fd5ab770/1*yL3vI1ADzwlovctW5WQgJw.jpeg")!)
            observations.forEach { observation in
                print("\(observation.identifier): \(observation.confidence)")
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let completionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNClassificationObservation] else {
            return
        }
        observations.forEach { observation in
            print("\(observation.identifier): \(observation.confidence)")
        }
    }

    let request = VNClassifyImageRequest(completionHandler: completionHandler)
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: URL(string: "https://zhgchg.li/assets/cb65fd5ab770/1*3_jdrLurFuUfNdW4BJaRww.jpeg")!, options: [:])
        do {
            try handler.perform([request])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

Analysis Results:

• outdoor: 0.75392926

• sky: 0.75392926

• blue_sky: 0.7519531

• machine: 0.6958008

• cloudy: 0.26538086

• structure: 0.15728651

• sign: 0.14224191

• fence: 0.118652344

• banner: 0.0793457

• material: 0.075975396

• plant: 0.054406323

• foliage: 0.05029297

• light: 0.048126098

• lamppost: 0.048095703

• billboards: 0.040039062

• art: 0.03977703

• branch: 0.03930664

• decoration: 0.036868922

• flag: 0.036865234

....etc
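Since the classifier returns many low-confidence labels, you will usually want a cutoff. The sketch below applies one to the first six results listed above. `Label` and `confidentLabels(_:threshold:)` are hypothetical names used for illustration, not Vision API, and the 0.5 threshold is an arbitrary assumption.

```swift
// Hypothetical stand-in for an (identifier, confidence) pair; not a Vision type.
struct Label {
    let identifier: String
    let confidence: Float
}

// Returns the identifiers whose confidence is at or above the threshold.
func confidentLabels(_ labels: [Label], threshold: Float) -> [String] {
    labels.filter { $0.confidence >= threshold }.map(\.identifier)
}

// First six entries of the analysis results above.
let labels = [
    Label(identifier: "outdoor", confidence: 0.75392926),
    Label(identifier: "sky", confidence: 0.75392926),
    Label(identifier: "blue_sky", confidence: 0.7519531),
    Label(identifier: "machine", confidence: 0.6958008),
    Label(identifier: "cloudy", confidence: 0.26538086),
    Label(identifier: "structure", confidence: 0.15728651),
]
print(confidentLabels(labels, threshold: 0.5)) // ["outdoor", "sky", "blue_sky", "machine"]
```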

RecognizeTextRequest

Recognize the text content in images. (a.k.a. Image to Text)

[![[Travelogue] 5-Day Free Trip to Tokyo 2023](/assets/755509180ca8/1*XL40lLT774PfO60rCIfnxA.webp)](/posts/travel-journals/tokyo-5-day-travel-guide-top-tips-for-food-stay-transport-9da2c51fa4f2/)

[Travelogue] 5-Day Free Trip to Tokyo 2023

```swift
if #available(iOS 18.0, *) {
    // New API using Swift features
    var request = RecognizeTextRequest()
    request.recognitionLevel = .accurate
    request.recognitionLanguages = [.init(identifier: "ja-JP"), .init(identifier: "en-US")] // Specify language codes (here Japanese and English)
    Task { [request] in // Capture a copy so the task does not reference a mutable var
        do {
            let observations = try await request.perform(on: URL(string: "https://zhgchg.li/assets/9da2c51fa4f2/1*fBbNbDepYioQ-3-0XUkF6Q.jpeg")!)
            observations.forEach { observation in
                let topCandidate = observation.topCandidates(1).first
                print(topCandidate?.string ?? "No text recognized")
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let completionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNRecognizedTextObservation] else {
            return
        }
        observations.forEach { observation in
            let topCandidate = observation.topCandidates(1).first
            print(topCandidate?.string ?? "No text recognized")
        }
    }

    let request = VNRecognizeTextRequest(completionHandler: completionHandler)
    request.recognitionLevel = .accurate
    request.recognitionLanguages = ["ja-JP", "en-US"] // Specify language codes (here Japanese and English)
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: URL(string: "https://zhgchg.li/assets/9da2c51fa4f2/1*fBbNbDepYioQ-3-0XUkF6Q.jpeg")!, options: [:])
        do {
            try handler.perform([request])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

Analysis Results:

LE LABO Aoyama Store

TEL:03-6419-7167

*Thank you for your purchase*

No: 21347

Date: 2023/06/10 14.14.57

Staff:

1690370

Register: 008A 1

Product Name

Price incl. tax Quantity Total incl. tax

Caiac 10 EDP FB 15ML

J1P7010000S

16,800

16,800

Another 13 EDP FB 15ML

J1PJ010000S

10,700

10,700

Lip Palm 15ML

JOWC010000S

2,000

1

Total Amount

(Tax included)

CARD

2,000

3 items purchased

29,500

0

29,500

29,500

DetectBarcodesRequest

Detect barcode and QR code data in images.

Thai Locals Recommend Goose Brand Cooling Balm

```swift
let filePath = Bundle.main.path(forResource: "IMG_6777", ofType: "png")! // Local test image
let fileURL = URL(filePath: filePath)

if #available(iOS 18.0, *) {
    // New API using Swift features
    let request = DetectBarcodesRequest()
    Task {
        do {
            let observations = try await request.perform(on: fileURL)
            observations.forEach { observation in
                print("Payload: \(observation.payloadString ?? "No payload")")
                print("Symbology: \(observation.symbology)")
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let completionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNBarcodeObservation] else {
            return
        }
        observations.forEach { observation in
            print("Payload: \(observation.payloadStringValue ?? "No payload")")
            print("Symbology: \(observation.symbology.rawValue)")
        }
    }

    let request = VNDetectBarcodesRequest(completionHandler: completionHandler)
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: fileURL, options: [:])
        do {
            try handler.perform([request])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

Analysis Results:

Payload: 8859126000911

Symbology: VNBarcodeSymbologyEAN13

Payload: https://lin.ee/hGynbVM

Symbology: VNBarcodeSymbologyQR

Payload: http://www.hongthaipanich.com/

Symbology: VNBarcodeSymbologyQR

Payload: https://www.facebook.com/qr?id=100063856061714

Symbology: VNBarcodeSymbologyQR

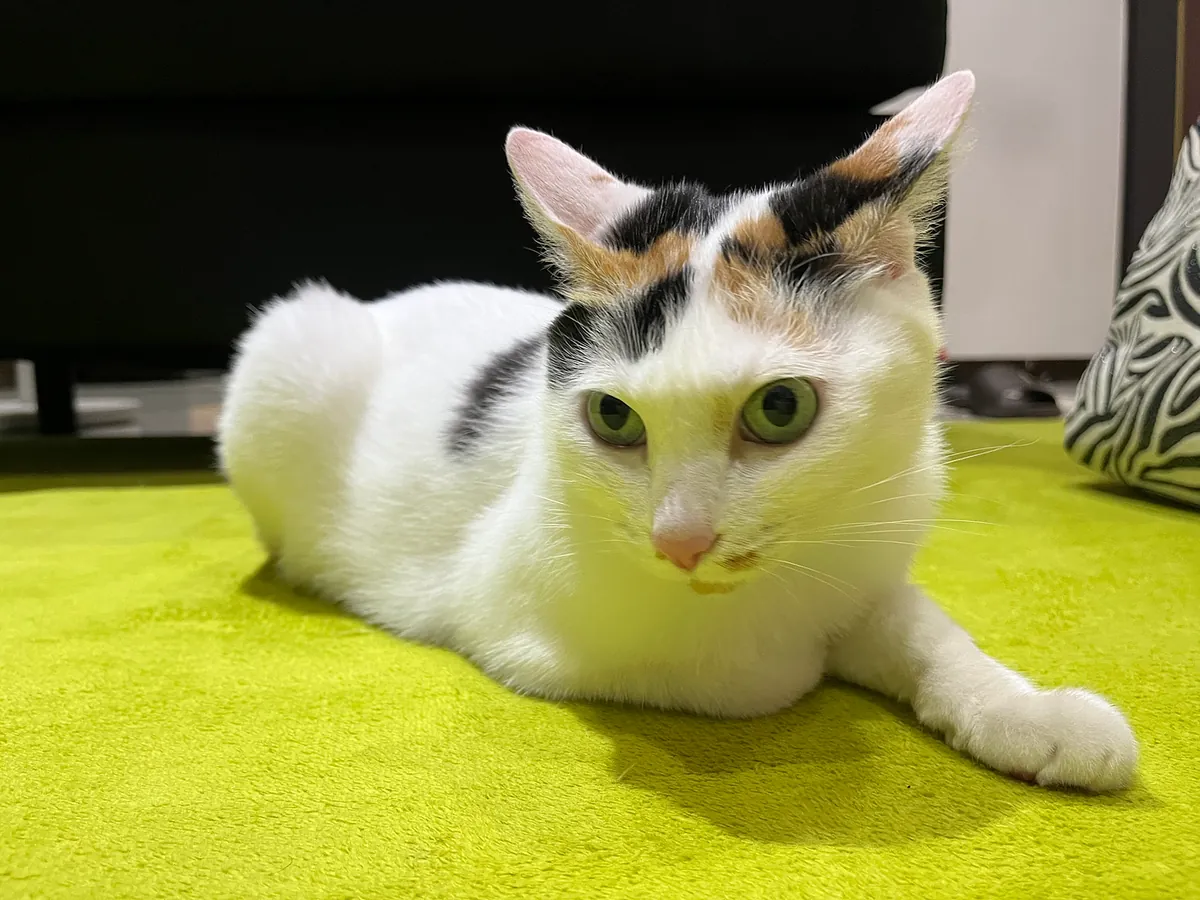

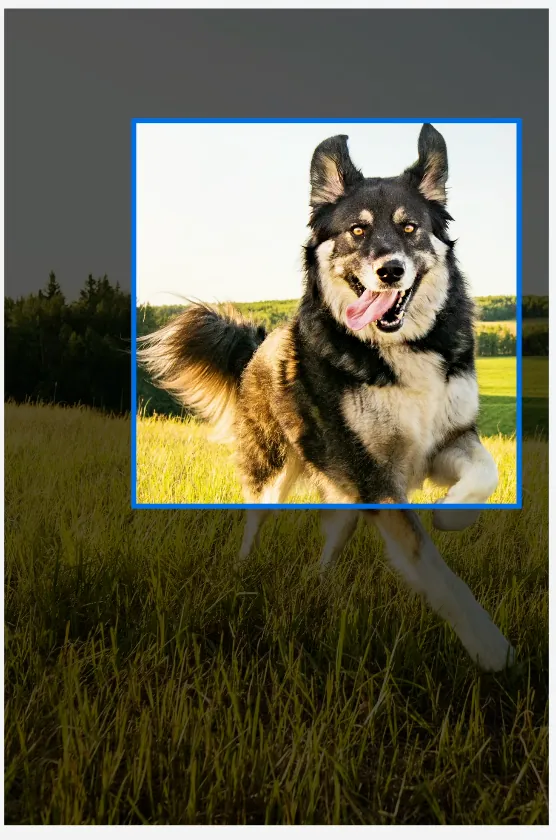

RecognizeAnimalsRequest

Recognize animals in the image along with confidence levels.

```swift
let filePath = Bundle.main.path(forResource: "IMG_5026", ofType: "png")! // Local test image
let fileURL = URL(filePath: filePath)

if #available(iOS 18.0, *) {
    // New API using Swift features
    let request = RecognizeAnimalsRequest()
    Task {
        do {
            let observations = try await request.perform(on: fileURL)
            observations.forEach { observation in
                let labels = observation.labels
                labels.forEach { label in
                    print("Detected animal: \(label.identifier) with confidence: \(label.confidence)")
                }
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let completionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNRecognizedObjectObservation] else {
            return
        }
        observations.forEach { observation in
            let labels = observation.labels
            labels.forEach { label in
                print("Detected animal: \(label.identifier) with confidence: \(label.confidence)")
            }
        }
    }

    let request = VNRecognizeAnimalsRequest(completionHandler: completionHandler)
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: fileURL, options: [:])
        do {
            try handler.perform([request])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

Analysis results:

Detected animal: Cat with confidence: 0.77245045

Others:

- Detecting human figures in images: DetectHumanRectanglesRequest
- Detecting poses of humans and animals (both 3D and 2D): DetectAnimalBodyPoseRequest, DetectHumanBodyPose3DRequest, DetectHumanBodyPoseRequest, DetectHumanHandPoseRequest
- Detecting and tracking the motion path of objects (across different frames in videos or animations): DetectTrajectoriesRequest, TrackObjectRequest, TrackRectangleRequest

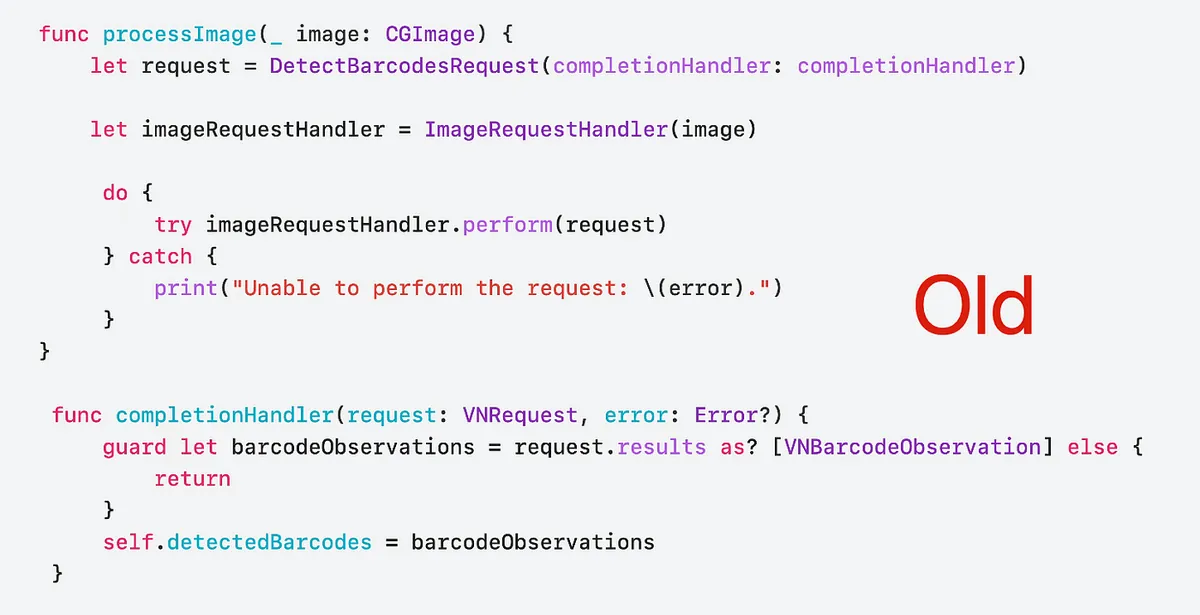

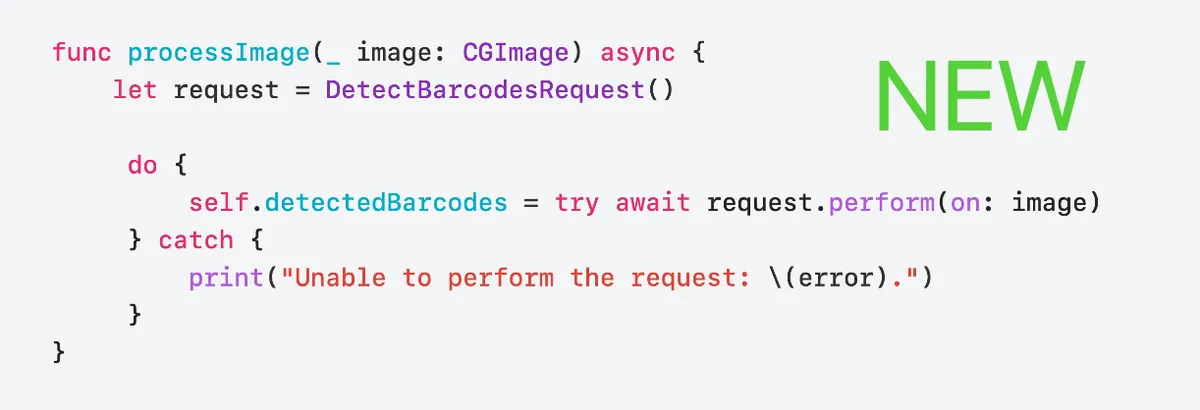

iOS ≥ 18 Update Highlight:

VN*Request -> *Request (e.g. VNDetectBarcodesRequest -> DetectBarcodesRequest)

VN*Observation -> *Observation (e.g. VNRecognizedObjectObservation -> RecognizedObjectObservation)

VNRequestCompletionHandler -> async/await

VNImageRequestHandler.perform([VN*Request]) -> *Request.perform()

WWDC Example

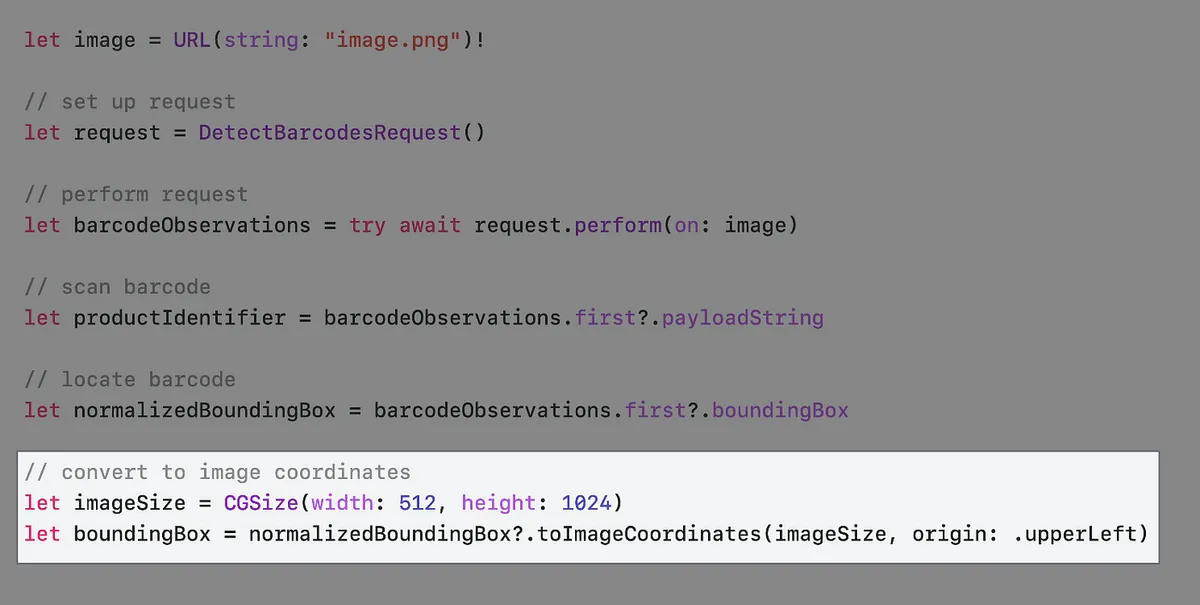

The official WWDC video uses a supermarket product scanner as an example.

First, most products have a barcode that can be scanned.

We can get the barcode location from observation.boundingBox, but unlike the common UIView coordinate system, the boundingBox origin is at the bottom-left corner, with values ranging between 0 and 1.
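The coordinate flip described above can be sketched in a few lines. This is a minimal illustration, assuming the image fills the target area exactly (no aspect-fit letterboxing); `viewRect(fromNormalized:in:)` is a hypothetical helper name, not a Vision API, and the sample numbers are made up.

```swift
import Foundation

// Converts a normalized rect with a lower-left origin into point coordinates
// with an upper-left origin, which is the convention UIKit views use.
func viewRect(fromNormalized box: CGRect, in size: CGSize) -> CGRect {
    CGRect(
        x: box.minX * size.width,
        y: (1 - box.maxY) * size.height, // flip the vertical axis
        width: box.width * size.width,
        height: box.height * size.height
    )
}

// A box covering the middle half of the width, from 50% to 75% of the height
// (measured from the bottom), mapped into a 400x200 area.
let box = CGRect(x: 0.25, y: 0.5, width: 0.5, height: 0.25)
let rect = viewRect(fromNormalized: box, in: CGSize(width: 400, height: 200))
print(rect) // origin (100, 50), size (200, 50)
```

The only non-obvious step is the vertical flip: the top edge in view space is `1 - maxY` of the normalized box, not `minY`.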

```swift
let filePath = Bundle.main.path(forResource: "IMG_6785", ofType: "png")! // Local test image
let fileURL = URL(filePath: filePath)

if #available(iOS 18.0, *) {
    // New API using Swift features
    var request = DetectBarcodesRequest()
    request.symbologies = [.ean13] // Restrict to EAN-13 barcodes to improve performance
    Task { [request] in // Capture a copy so the task does not reference a mutable var
        do {
            let observations = try await request.perform(on: fileURL)
            if let observation = observations.first {
                DispatchQueue.main.async {
                    self.infoLabel.text = observation.payloadString

                    // Mark color layer
                    let colorLayer = CALayer()
                    // New coordinate conversion API toImageCoordinates in iOS >= 18
                    // Untested; the ContentMode = AspectFit offset may still need to be calculated:
                    colorLayer.frame = observation.boundingBox.toImageCoordinates(self.baseImageView.frame.size, origin: .upperLeft)
                    colorLayer.backgroundColor = UIColor.red.withAlphaComponent(0.5).cgColor
                    self.baseImageView.layer.addSublayer(colorLayer)
                }
                print("BoundingBox: \(observation.boundingBox.cgRect)")
                print("Payload: \(observation.payloadString ?? "No payload")")
                print("Symbology: \(observation.symbology)")
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let completionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNBarcodeObservation] else {
            return
        }
        if let observation = observations.first {
            DispatchQueue.main.async {
                self.infoLabel.text = observation.payloadStringValue

                // Mark color layer
                let colorLayer = CALayer()
                colorLayer.frame = self.convertBoundingBox(observation.boundingBox, to: self.baseImageView)
                colorLayer.backgroundColor = UIColor.red.withAlphaComponent(0.5).cgColor
                self.baseImageView.layer.addSublayer(colorLayer)
            }
            print("BoundingBox: \(observation.boundingBox)")
            print("Payload: \(observation.payloadStringValue ?? "No payload")")
            print("Symbology: \(observation.symbology.rawValue)")
        }
    }

    let request = VNDetectBarcodesRequest(completionHandler: completionHandler)
    request.symbologies = [.ean13] // Restrict to EAN-13 barcodes to improve performance
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: fileURL, options: [:])
        do {
            try handler.perform([request])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

iOS ≥ 18 Update Highlight:

```swift
// New coordinate conversion API toImageCoordinates in iOS >= 18
observation.boundingBox.toImageCoordinates(CGSize, origin: .upperLeft)
// https://developer.apple.com/documentation/vision/normalizedpoint/toimagecoordinates(from:imagesize:origin:)
```

Helper:

```swift
// Generated by ChatGPT 4o
// Since the photo in the ImageView uses ContentMode = AspectFit,
// we need to account for the offset caused by the blank space around the fitted image
func convertBoundingBox(_ boundingBox: CGRect, to view: UIImageView) -> CGRect {
    guard let image = view.image else {
        return .zero
    }

    let imageSize = image.size
    let viewSize = view.bounds.size
    let imageRatio = imageSize.width / imageSize.height
    let viewRatio = viewSize.width / viewSize.height

    let scaleFactor: CGFloat
    var offsetX: CGFloat = 0
    var offsetY: CGFloat = 0

    if imageRatio > viewRatio {
        // Image fits width-wise
        scaleFactor = viewSize.width / imageSize.width
        offsetY = (viewSize.height - imageSize.height * scaleFactor) / 2
    } else {
        // Image fits height-wise
        scaleFactor = viewSize.height / imageSize.height
        offsetX = (viewSize.width - imageSize.width * scaleFactor) / 2
    }

    let x = boundingBox.minX * imageSize.width * scaleFactor + offsetX
    let y = (1 - boundingBox.maxY) * imageSize.height * scaleFactor + offsetY
    let width = boundingBox.width * imageSize.width * scaleFactor
    let height = boundingBox.height * imageSize.height * scaleFactor

    return CGRect(x: x, y: y, width: width, height: height)
}
```
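For checking the aspect-fit math without a device, the same calculation can be written against plain sizes instead of a `UIImageView`. This is a sketch of that idea; `aspectFitRect(forNormalized:imageSize:viewSize:)` is a hypothetical name, and the sizes in the example are made up so the result is easy to verify by hand.

```swift
import Foundation

// UIKit-free variant of the helper above: takes sizes instead of a UIImageView.
// `box` is normalized with a lower-left origin; the result uses an upper-left origin.
func aspectFitRect(forNormalized box: CGRect, imageSize: CGSize, viewSize: CGSize) -> CGRect {
    guard imageSize.width > 0, imageSize.height > 0 else { return .zero }

    // Aspect fit scales by the smaller ratio so the whole image stays visible.
    let scale = min(viewSize.width / imageSize.width, viewSize.height / imageSize.height)
    let shown = CGSize(width: imageSize.width * scale, height: imageSize.height * scale)

    // The leftover space is split evenly on both sides.
    let offsetX = (viewSize.width - shown.width) / 2
    let offsetY = (viewSize.height - shown.height) / 2

    return CGRect(
        x: box.minX * shown.width + offsetX,
        y: (1 - box.maxY) * shown.height + offsetY,
        width: box.width * shown.width,
        height: box.height * shown.height
    )
}

// An 800x400 image in a 400x400 view is shown at 400x200,
// leaving 100 points of blank space above and below.
let fitted = aspectFitRect(
    forNormalized: CGRect(x: 0.25, y: 0.5, width: 0.5, height: 0.25),
    imageSize: CGSize(width: 800, height: 400),
    viewSize: CGSize(width: 400, height: 400)
)
print(fitted) // origin (100, 150), size (200, 50)
```

Taking the minimum of the two scale ratios is equivalent to the width-wise and height-wise branches in the helper above.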

Output Result

BoundingBox: (0.5295758928571429, 0.21408638121589782, 0.0943080357142857, 0.21254415360708087)

Payload: 4710018183805

Symbology: VNBarcodeSymbologyEAN13

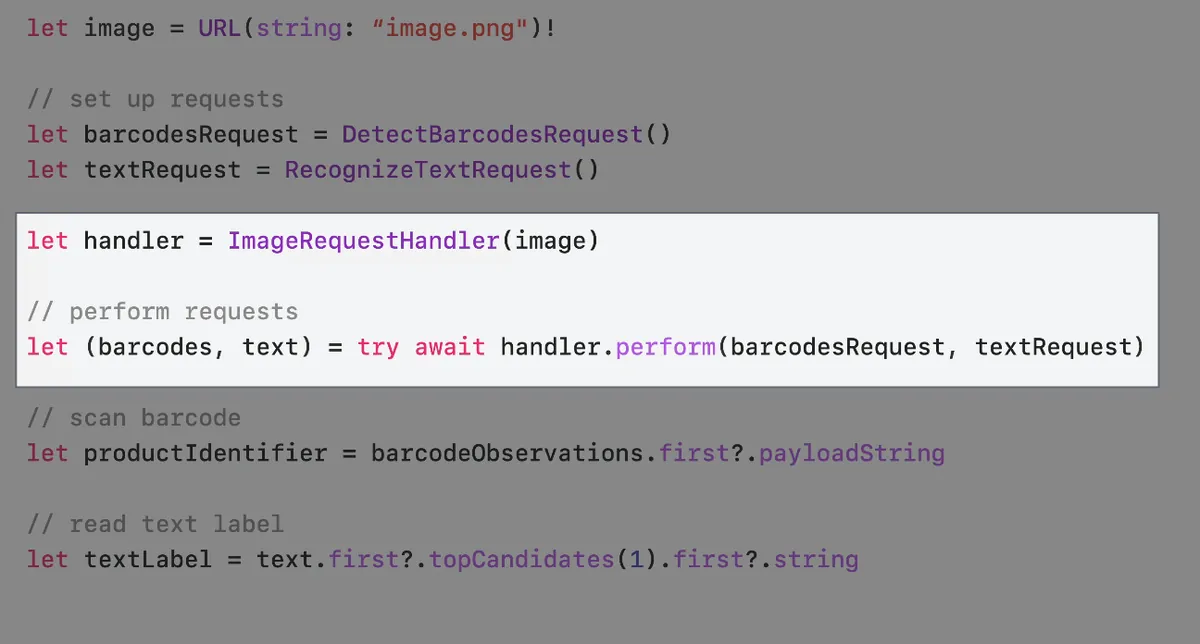

Some products have no barcode, such as loose fruit that carries only a product label.

Our scanner therefore also needs to recognize plain-text labels at the same time.

```swift
let filePath = Bundle.main.path(forResource: "apple", ofType: "jpg")! // Local test image
let fileURL = URL(filePath: filePath)

if #available(iOS 18.0, *) {
    // New API using Swift features
    var barcodesRequest = DetectBarcodesRequest()
    barcodesRequest.symbologies = [.ean13] // Restrict to EAN-13 barcodes to improve performance
    var textRequest = RecognizeTextRequest()
    textRequest.recognitionLanguages = [.init(identifier: "zh-Hant"), .init(identifier: "en-US")]
    Task { [barcodesRequest, textRequest] in // Capture copies so the task does not reference mutable vars
        do {
            let handler = ImageRequestHandler(fileURL)
            // Parameter pack syntax: all requests must finish before any result can be used.
            // let (barcodesObservation, textObservation, ...) = try await handler.perform(barcodesRequest, textRequest, ...)
            let (barcodesObservation, textObservation) = try await handler.perform(barcodesRequest, textRequest)
            if let observation = barcodesObservation.first {
                DispatchQueue.main.async {
                    self.infoLabel.text = observation.payloadString

                    // Mark color layer
                    let colorLayer = CALayer()
                    // New coordinate conversion API toImageCoordinates in iOS >= 18
                    // Untested; the ContentMode = AspectFit offset may still need to be calculated:
                    colorLayer.frame = observation.boundingBox.toImageCoordinates(self.baseImageView.frame.size, origin: .upperLeft)
                    colorLayer.backgroundColor = UIColor.red.withAlphaComponent(0.5).cgColor
                    self.baseImageView.layer.addSublayer(colorLayer)
                }
                print("BoundingBox: \(observation.boundingBox.cgRect)")
                print("Payload: \(observation.payloadString ?? "No payload")")
                print("Symbology: \(observation.symbology)")
            }
            textObservation.forEach { observation in
                let topCandidate = observation.topCandidates(1).first
                print(topCandidate?.string ?? "No text recognized")
            }
        } catch {
            print("Request failed: \(error)")
        }
    }
} else {
    // Old approach
    let barcodesCompletionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNBarcodeObservation] else {
            return
        }
        if let observation = observations.first {
            DispatchQueue.main.async {
                self.infoLabel.text = observation.payloadStringValue

                // Mark color layer
                let colorLayer = CALayer()
                colorLayer.frame = self.convertBoundingBox(observation.boundingBox, to: self.baseImageView)
                colorLayer.backgroundColor = UIColor.red.withAlphaComponent(0.5).cgColor
                self.baseImageView.layer.addSublayer(colorLayer)
            }
            print("BoundingBox: \(observation.boundingBox)")
            print("Payload: \(observation.payloadStringValue ?? "No payload")")
            print("Symbology: \(observation.symbology.rawValue)")
        }
    }

    let textCompletionHandler: VNRequestCompletionHandler = { request, error in
        guard error == nil else {
            print("Request failed: \(String(describing: error))")
            return
        }
        guard let observations = request.results as? [VNRecognizedTextObservation] else {
            return
        }
        observations.forEach { observation in
            let topCandidate = observation.topCandidates(1).first
            print(topCandidate?.string ?? "No text recognized")
        }
    }

    let barcodesRequest = VNDetectBarcodesRequest(completionHandler: barcodesCompletionHandler)
    barcodesRequest.symbologies = [.ean13] // Restrict to EAN-13 barcodes to improve performance
    let textRequest = VNRecognizeTextRequest(completionHandler: textCompletionHandler)
    textRequest.recognitionLevel = .accurate
    textRequest.recognitionLanguages = ["en-US"]
    DispatchQueue.global().async {
        let handler = VNImageRequestHandler(url: fileURL, options: [:])
        do {
            try handler.perform([barcodesRequest, textRequest])
        } catch {
            print("Request failed: \(error)")
        }
    }
}
```

Output:

94128s

ORGANIC

Pink Lady®

Produce of USh

iOS ≥ 18 Update Highlight:

```swift
let handler = ImageRequestHandler(fileURL)
// Parameter pack syntax: all requests must finish before any result can be used.
// let (barcodesObservation, textObservation, ...) = try await handler.perform(barcodesRequest, textRequest, ...)
let (barcodesObservation, textObservation) = try await handler.perform(barcodesRequest, textRequest)
```

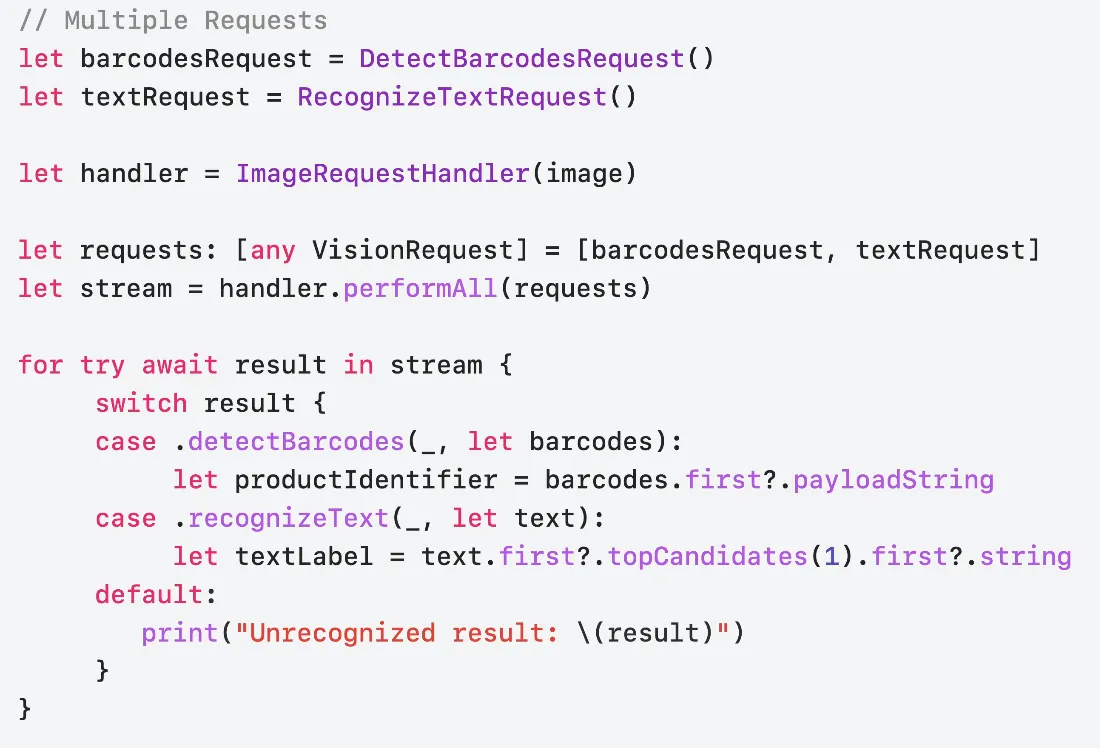

iOS ≥ 18 performAll() method

The perform(barcodesRequest, textRequest) call shown earlier waits for both requests to finish before continuing. iOS 18 also adds performAll(), which streams the results so that each one can be handled as soon as it arrives; for example, you can respond immediately when a barcode is detected.

```swift
if #available(iOS 18.0, *) {
    // New API using Swift features
    var barcodesRequest = DetectBarcodesRequest()
    barcodesRequest.symbologies = [.ean13] // Restrict to EAN-13 barcodes to improve performance
    var textRequest = RecognizeTextRequest()
    textRequest.recognitionLanguages = [.init(identifier: "zh-Hant"), .init(identifier: "en-US")]
    Task { [barcodesRequest, textRequest] in // Capture copies so the task does not reference mutable vars
        let handler = ImageRequestHandler(fileURL)
        let observation = handler.performAll([barcodesRequest, textRequest] as [any VisionRequest])
        for try await result in observation {
            switch result {
            case .detectBarcodes(_, let barcodesObservation):
                if let observation = barcodesObservation.first {
                    DispatchQueue.main.async {
                        self.infoLabel.text = observation.payloadString

                        // Mark color layer
                        let colorLayer = CALayer()
                        // New coordinate conversion API toImageCoordinates in iOS >= 18
                        // Untested; the ContentMode = AspectFit offset may still need to be calculated:
                        colorLayer.frame = observation.boundingBox.toImageCoordinates(self.baseImageView.frame.size, origin: .upperLeft)
                        colorLayer.backgroundColor = UIColor.red.withAlphaComponent(0.5).cgColor
                        self.baseImageView.layer.addSublayer(colorLayer)
                    }
                    print("BoundingBox: \(observation.boundingBox.cgRect)")
                    print("Payload: \(observation.payloadString ?? "No payload")")
                    print("Symbology: \(observation.symbology)")
                }
            case .recognizeText(_, let textObservation):
                textObservation.forEach { observation in
                    let topCandidate = observation.topCandidates(1).first
                    print(topCandidate?.string ?? "No text recognized")
                }
            default:
                print("Unrecognized result: \(result)")
            }
        }
    }
}
```
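The difference between the two styles can be shown with plain Swift Concurrency, without Vision. The sketch below is only an analogy and makes no claim about how Vision implements `perform` or `performAll`; `fakeRequest`, `waitForAll`, and `streamResults` are hypothetical names, and the delays are made up to simulate a fast barcode request and a slow text request.

```swift
// Simulates a request that takes `milliseconds` to finish; hypothetical, not Vision.
func fakeRequest(_ name: String, milliseconds: UInt64) async throws -> String {
    try await Task.sleep(nanoseconds: milliseconds * 1_000_000)
    return name
}

// Wait-for-all style: nothing is available until the slowest request finishes.
func waitForAll() async throws -> (String, String) {
    async let text = fakeRequest("text", milliseconds: 300)
    async let barcode = fakeRequest("barcode", milliseconds: 20)
    return try await (barcode, text)
}

// Streaming style: results are collected in the order they complete.
func streamResults() async throws -> [String] {
    try await withThrowingTaskGroup(of: String.self) { group in
        group.addTask { try await fakeRequest("text", milliseconds: 300) }
        group.addTask { try await fakeRequest("barcode", milliseconds: 20) }
        var completionOrder: [String] = []
        for try await name in group {
            completionOrder.append(name) // handle each result as soon as it arrives
        }
        return completionOrder
    }
}

let completionOrder = try await streamResults()
print(completionOrder) // the fast "barcode" result arrives before "text"
```

In the streaming version the barcode result can update the UI after about 20 ms, while the wait-for-all version holds it back until the slower text request is done.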

Optimize with Swift Concurrency

Suppose we have a photo wall list, and each image needs automatic cropping to focus on the main subject; in this case, Swift Concurrency can be used to improve loading efficiency.

Original Implementation

```swift
func generateThumbnail(url: URL) async throws -> UIImage {
    let request = GenerateAttentionBasedSaliencyImageRequest()
    let saliencyObservation = try await request.perform(on: url)
    return cropImage(url, to: saliencyObservation.salientObjects)
}

func generateAllThumbnails() async throws {
    for image in images {
        image.thumbnail = try await generateThumbnail(url: image.url)
    }
}
```

Processing the images one at a time is slow.

Optimization (1) — TaskGroup Concurrency

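```swift
func generateAllThumbnails() async throws {
    try await withThrowingDiscardingTaskGroup { taskGroup in
        for image in images {
            // Each image gets its own child task so the work runs concurrently
            taskGroup.addTask {
                image.thumbnail = try await generateThumbnail(url: image.url)
            }
        }
    }
}
```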

Add each task to a TaskGroup so that they run concurrently.

Issue: Image recognition and cropping consume a lot of memory. Adding an unlimited number of concurrent tasks may cause lag for the user and out-of-memory crashes.

Optimization (2) — TaskGroup Concurrency + Limiting Concurrency Count

```swift
func generateAllThumbnails() async throws {
    // A discarding task group cannot be iterated with for-await,
    // so a regular throwing task group is used here.
    try await withThrowingTaskGroup(of: Void.self) { taskGroup in
        // Maximum number of concurrent tasks should not exceed 5
        let maxImageTasks = min(5, images.count)

        // Initially fill up to 5 tasks
        for index in 0..<maxImageTasks {
            let image = images[index]
            taskGroup.addTask {
                image.thumbnail = try await generateThumbnail(url: image.url)
            }
        }

        var nextIndex = maxImageTasks
        for try await _ in taskGroup {
            // When a task in the taskGroup completes...
            // Check whether the index has reached the end
            if nextIndex < images.count {
                let image = images[nextIndex]
                // Continue adding tasks one by one (maintaining a max of 5)
                taskGroup.addTask {
                    image.thumbnail = try await generateThumbnail(url: image.url)
                }
                nextIndex += 1
            }
        }
    }
}
```
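The same "seed a few tasks, then refill as each finishes" pattern can be written as a reusable helper and tried without Vision. This is a minimal sketch; `mapConcurrently(_:limit:work:)` is a hypothetical name, and the doubling closure stands in for thumbnail generation.

```swift
// Runs `work` over `items` with at most `limit` tasks in flight,
// returning the results in the original order.
func mapConcurrently<T: Sendable, R: Sendable>(
    _ items: [T],
    limit: Int,
    work: @escaping @Sendable (T) async throws -> R
) async throws -> [R] {
    precondition(limit > 0, "limit must be positive")
    return try await withThrowingTaskGroup(of: (Int, R).self, returning: [R].self) { group in
        var results = [R?](repeating: nil, count: items.count)
        var nextIndex = 0

        // Seed the group with up to `limit` tasks.
        while nextIndex < min(limit, items.count) {
            let index = nextIndex
            let item = items[index]
            group.addTask {
                let value = try await work(item)
                return (index, value)
            }
            nextIndex += 1
        }

        // Each time one task finishes, store its result and start the next pending item.
        while let (finishedIndex, value) = try await group.next() {
            results[finishedIndex] = value
            if nextIndex < items.count {
                let index = nextIndex
                let item = items[index]
                group.addTask {
                    let value = try await work(item)
                    return (index, value)
                }
                nextIndex += 1
            }
        }
        return results.compactMap { $0 }
    }
}

// Stand-in workload: doubles each number after a short delay.
let doubled = try await mapConcurrently([1, 2, 3, 4, 5, 6, 7], limit: 5) { (n: Int) async throws -> Int in
    try await Task.sleep(nanoseconds: 1_000_000)
    return n * 2
}
print(doubled) // [2, 4, 6, 8, 10, 12, 14]
```

Returning `(index, value)` pairs lets the helper restore the input order even though tasks finish in arbitrary order.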

Update an existing Vision app

- Vision will remove CPU and GPU support for some requests on devices with a Neural Engine. On these devices, the Neural Engine offers the best performance. You can check this using the supportedComputeDevices() API.
- Remove all VN prefixes: VNXXXRequest, VNXXXObservation -> XXXRequest, XXXObservation
- Use async/await to replace the original VNRequestCompletionHandler
- Use \*Request.perform() directly instead of the original VNImageRequestHandler.perform([VN\*Request])

Wrap-up

- APIs newly designed for Swift language features
- New features and methods are Swift-only, available on iOS ≥ 18
- New image aesthetics scoring feature, plus combined body and hand pose tracking

Thanks!

KKday Recruitment Advertisement

👉👉👉This study group sharing comes from the weekly technical sharing sessions within the KKday App Team. The team is currently enthusiastically recruiting a Senior iOS Engineer. Interested candidates are welcome to apply. 👈👈👈

References

Discover Swift enhancements in the Vision framework

The Vision Framework API has been redesigned to leverage modern Swift features like concurrency, making it easier and faster to integrate a wide array of Vision algorithms into your app. We’ll tour the updated API and share sample code, along with best practices, to help you get the benefits of this framework with less coding effort. We’ll also demonstrate two new features: image aesthetics and holistic body pose.

Chapters

- 0:00 - Introduction
- 1:07 - New Vision API
- 1:47 - Get started with Vision
- 11:05 - Update an existing Vision app
- 13:46 - What’s new in Vision?

Vision framework Apple Developer Documentation
